Frameworks

AI did not create the assessment problem. It exposed a structural flaw that had been there all along: we confused finished work with visible thinking.

The Artifact Assumption

For much of modern schooling, learning has been evaluated through an arrangement so familiar that its logic is rarely questioned. Students produce an artifact—an essay, a discussion response, a presentation, a report—and the artifact is taken as evidence that thinking occurred. The finished work stands in for the cognitive activity that produced it.

This arrangement has always been partly symbolic. Educators rarely witness the entire thinking process that unfolds as students interpret texts, compare ideas, or revise arguments. Instead, the final product functions as a trace of that invisible work. We infer reasoning from structure. We infer comprehension from coherence. We infer understanding from the appearance of organized thought.

In this sense, the artifact has long served as a proxy for cognition.

Artificial intelligence did not create this arrangement. What it has done—rapidly and somewhat uncomfortably—is reveal how fragile the arrangement always was. When a machine can generate a convincing artifact, the artifact itself ceases to guarantee anything about the thinking behind it.

An essay can now appear without the reasoning that once produced it. A summary can be generated without comprehension. A discussion post can materialize without any discussion at all. The visible structure remains intact while the invisible cognitive work quietly disappears.

AI did not introduce a new educational problem. It illuminated a structural assumption that had long gone unexamined.

The Misplaced Question

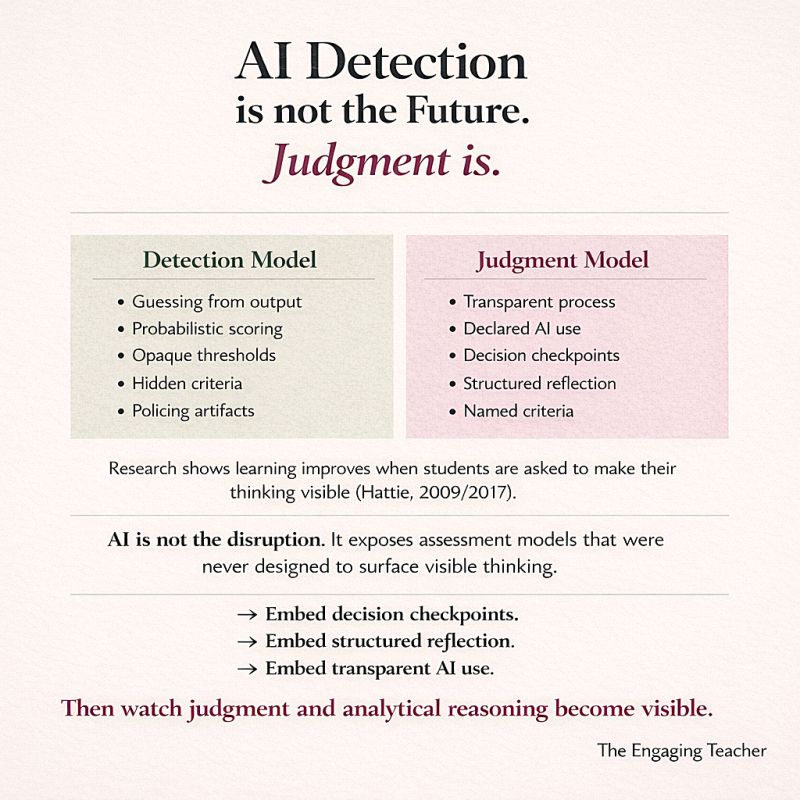

The most common institutional response to generative AI has been technological rather than pedagogical. If artificial intelligence can produce convincing student work, the reasoning goes, then the task becomes identifying whether AI was involved. Detection becomes the central problem.

This approach is understandable. It is also philosophically misplaced.

Detection presumes that the artifact should still function as the primary evidence of learning. If the artifact can be authenticated—if its authorship can be verified—then the existing assessment structure might remain intact.

But the deeper problem is not authorship. The deeper problem is epistemological.

The artifact was never direct evidence of thinking. It was only an inference.

Detection attempts to restore the credibility of the inference rather than reconsider the design of the evidence itself.

A more productive question begins elsewhere:

Where, within a learning task, does student judgment become visible?

Judgment occupies a different epistemic category than production. A polished paragraph can be generated automatically. A justification for why one argument was rejected in favor of another cannot easily be fabricated after the fact. The reasoning that accompanies a decision belongs to the individual who made it.

When instructional design surfaces those decisions, the artifact becomes less important. The thinking becomes observable in its own right.

The Visibility Problem

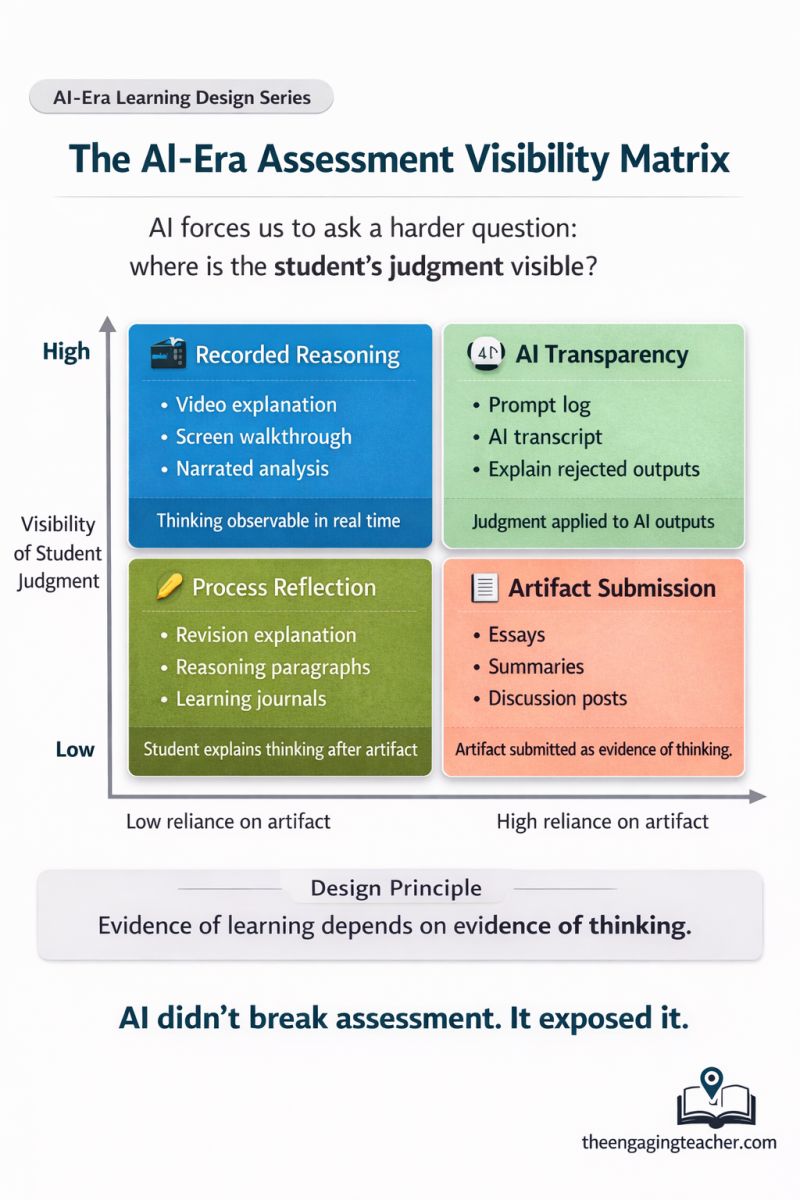

The tension exposed by AI is not primarily technological; it is structural. Many traditional assignments emphasize the final artifact while leaving the reasoning that produced it largely invisible. The finished work arrives neatly packaged, but the cognitive activity that generated it remains hidden.

This arrangement functioned reasonably well when producing articulate work required sustained effort. Writing itself imposed friction. The difficulty of constructing coherent prose served as a rough proxy for intellectual engagement.

Generative AI removes that friction almost instantly.

The artifact can now appear without the struggle that once accompanied it. What disappears in that transition is not merely effort but the epistemic trace of learning itself.

When learning tasks rely primarily on the finished product, automation becomes trivial. When tasks require students to explain their reasoning—how they evaluated alternatives, revised interpretations, or justified choices—the intellectual work becomes visible again.

The difference is not technological. It is architectural.

The AI Learning Trap

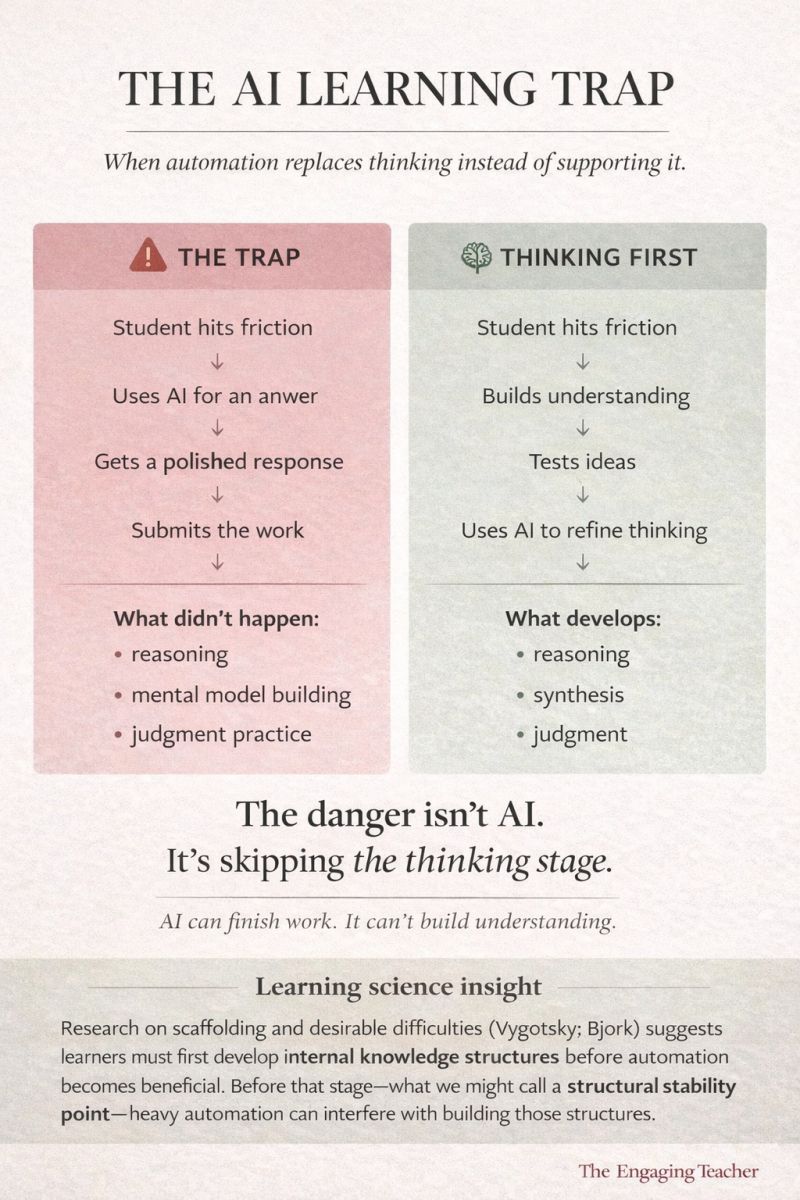

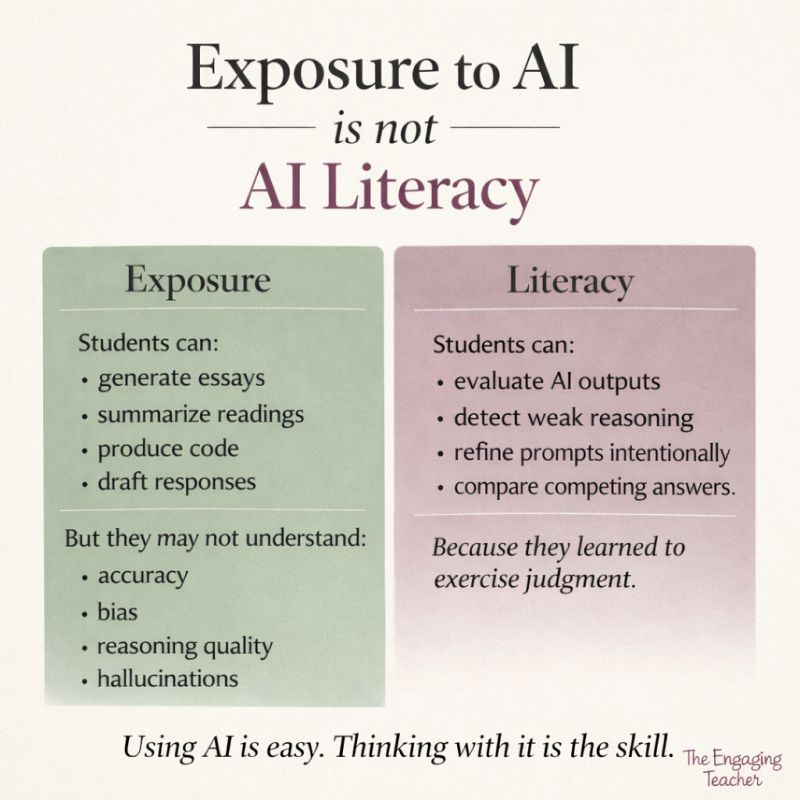

The most significant educational risk created by generative AI is not plagiarism. It is the quiet disappearance of cognitive struggle.

Students encounter difficulty. A system produces a polished response instantly. The response appears plausible. The assignment is submitted.

From the outside, nothing looks unusual. The structure of the work appears sound. The argument flows smoothly. The artifact fulfills the requirements of the task.

But the learning process that normally unfolds between confusion and understanding never occurred.

Research in educational psychology consistently links learning to effortful cognitive engagement (Brown, Roediger, & McDaniel, 2014; Chi & Wylie, 2014). Mental models develop when learners evaluate possibilities, confront uncertainty, revise interpretations, and reorganize their understanding.

Automation bypasses precisely this stage.

The assignment reaches completion. The cognitive work never begins.

Bloom’s Taxonomy Reconsidered

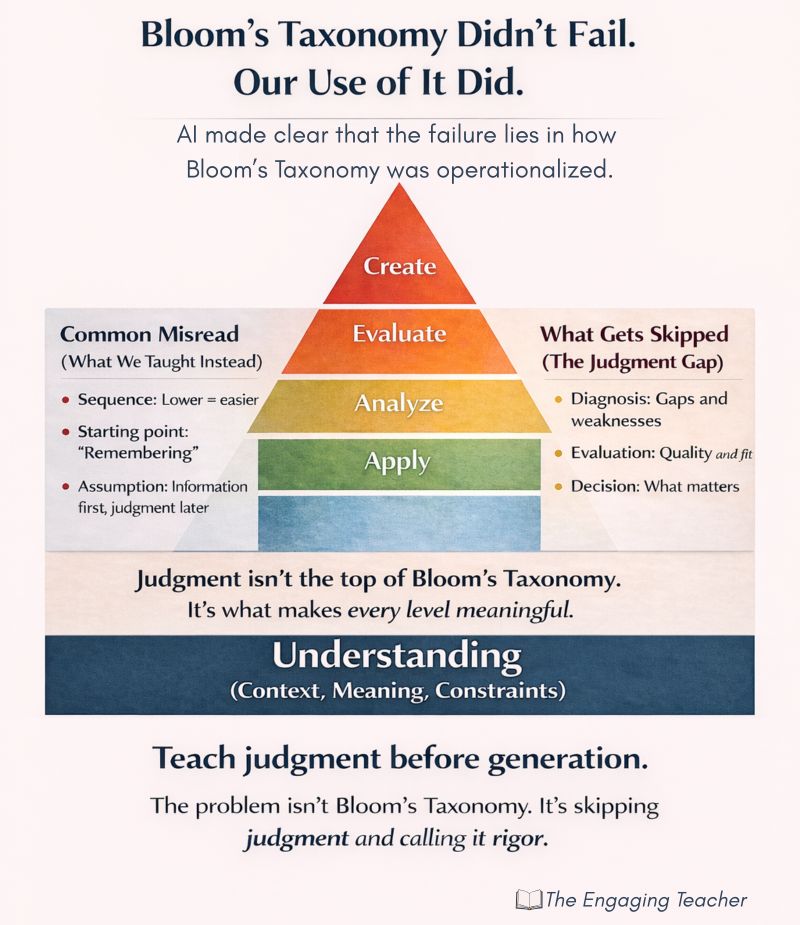

The renewed scrutiny of learning design has also revived criticism of Bloom’s Taxonomy. Some have interpreted the rise of AI as evidence that the taxonomy itself has become obsolete.

This interpretation misunderstands the role the taxonomy was intended to play.

Bloom’s framework does not prescribe a sequence of assignments. It categorizes forms of cognitive activity. The difficulty lies not in the taxonomy itself but in how educational practice often operationalized it.

In many classrooms, students were asked to produce complex artifacts before they had developed the evaluative judgment required to construct them meaningfully. Creation sits at the top of the hierarchy in the taxonomy's 2001 revision, yet in practice it was often treated as the starting point of instruction.

Generative AI makes that sequence unsustainable.

When production becomes effortless, the intellectual center of gravity shifts toward evaluation, comparison, interpretation, and justification. Judgment becomes the core activity through which understanding reveals itself.

Designing for Judgment

The most constructive response to AI does not lie in prohibiting the technology but in redesigning the architecture of learning tasks. When assignments require students to articulate the reasoning behind their decisions, the evidence of learning becomes visible again.

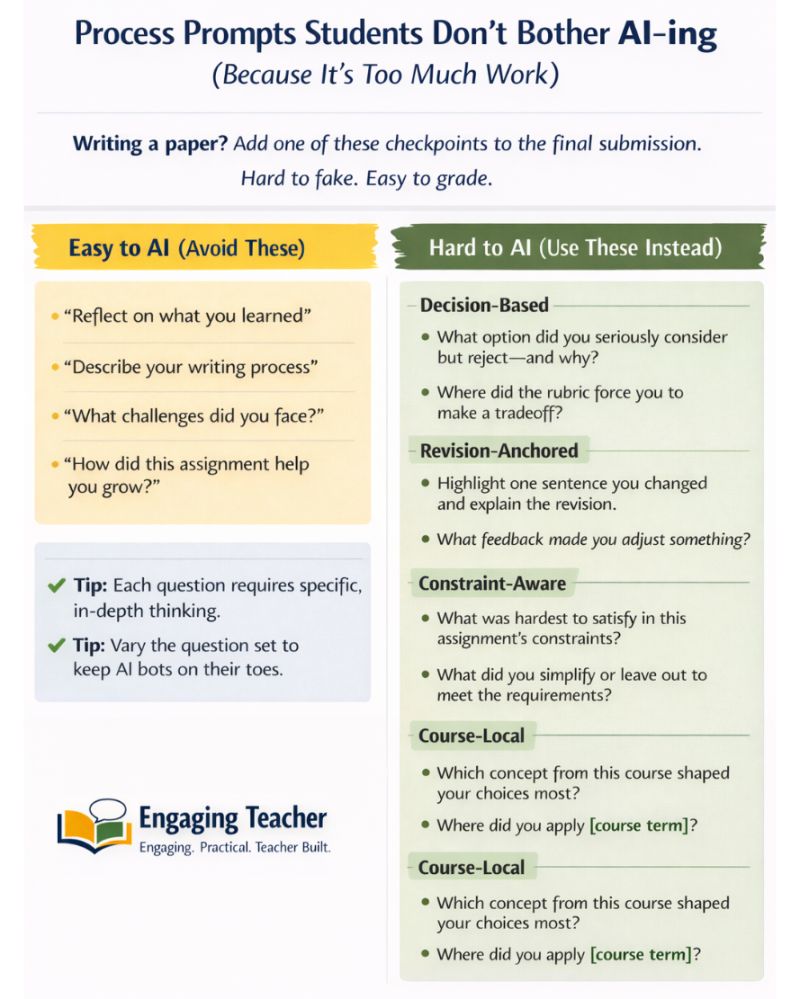

Questions that surface reasoning often appear deceptively simple:

- Which interpretation did you reject, and why?

- What tradeoff shaped your final decision?

- How did feedback alter your reasoning?

- What constraint influenced your conclusion most strongly?

These prompts do not attempt to eliminate AI from the learning process. Instead, they assume its presence while shifting the evidentiary focus toward the student’s own cognitive activity.

The Design Principle

The arrival of generative AI has not made learning impossible. It has simply clarified what learning requires.

When production becomes inexpensive, judgment becomes the intellectual work.

When judgment becomes the work, instructional design must reveal it.

Evidence of learning depends on evidence of thinking.

Artificial intelligence did not break assessment.

It exposed it.

References

Bloom, B. S. (1956). Taxonomy of Educational Objectives: The Classification of Educational Goals. Longmans.

Brown, P. C., Roediger, H. L., & McDaniel, M. A. (2014). Make It Stick: The Science of Successful Learning. Harvard University Press.

Chi, M. T. H., & Wylie, R. (2014). The ICAP framework: Linking cognitive engagement to active learning outcomes. Educational Psychologist.

Hattie, J. (2009). Visible Learning. Routledge.

Sweller, J., Ayres, P., & Kalyuga, S. (2011). Cognitive Load Theory. Springer.

Wiggins, G., & McTighe, J. (2005). Understanding by Design. ASCD.
