The Learning Foundations of AI Judgment

Rethinking Evidence of Learning in the Age of Generative AI

March 15, 2026

For much of modern education, we have operated on a quiet but powerful assumption: if the artifact is strong, the thinking behind it must have been strong as well.

Essays, research papers, discussion posts, reflections, and reports have long served as proxies for reasoning. When we encountered a polished piece of work, whether a coherent argument, a well-structured analysis, or a confident explanation, we inferred that the intellectual labor required to produce it had already taken place. The artifact functioned as a kind of footprint. If the print in the sand looked deep enough, we assumed the traveler who left it must have walked with intention.

Generative AI complicates that assumption.

Today a well-structured artifact can appear with startling ease. A paragraph that once took a student twenty minutes of fumbling and revision can now emerge in seconds, fluent and grammatically pristine. The footprint is still there in the sand, but the traveler is harder to see.

This does not mean that learning has disappeared, nor does it mean that artifacts themselves have lost value. Writing, explaining, and constructing arguments remain essential intellectual practices. But it does mean that the artifact alone tells us less than it once did about the thinking that produced it.

This raises a deeper design question for educators: What actually counts as evidence of learning?

The Artifact Assumption

For decades, much of assessment design has relied—sometimes implicitly—on what we might call the artifact assumption: that the quality of the final product reflects the quality of the reasoning behind it.

In many contexts, this assumption was practical and often reasonable. Producing a thoughtful essay or solving a complex problem typically required sustained cognitive effort. Even when students received help—from peers, tutors, or reference materials—the artifact still tended to bear the marks of their thinking process.

But generative AI changes the informational landscape around artifacts. When language models can generate essays, explanations, or summaries that appear structurally sound regardless of the student’s conceptual understanding, the relationship between artifact and reasoning becomes more ambiguous. The artifact may still exist. What becomes uncertain is whose reasoning it reflects.

This shift does not necessarily invalidate traditional assignments. But it does expose a structural limitation that was always present, though often hidden: artifacts do not inherently reveal the thinking that produced them. They only allow us to infer it. And inference, when separated from observable reasoning, is fragile.

Judgment Does Not Appear in a Vacuum

In response to these developments, many educators have emphasized the importance of student judgment. If AI can produce answers quickly, students must learn to evaluate those answers—to detect inaccuracies, identify missing assumptions, compare alternatives, and determine which responses are strongest. This emphasis is well placed. But judgment itself does not appear in a vacuum. It rests on deeper cognitive foundations.

A student cannot meaningfully evaluate an AI-generated explanation of photosynthesis without understanding the underlying biological processes. A student cannot assess the strength of an argument about economic policy without some conceptual framework for interpreting evidence and tradeoffs. Without that grounding, even flawed AI outputs can appear plausible, much like a convincing map to someone who has never seen the terrain. Judgment, in other words, emerges from the interaction of several forms of thinking.

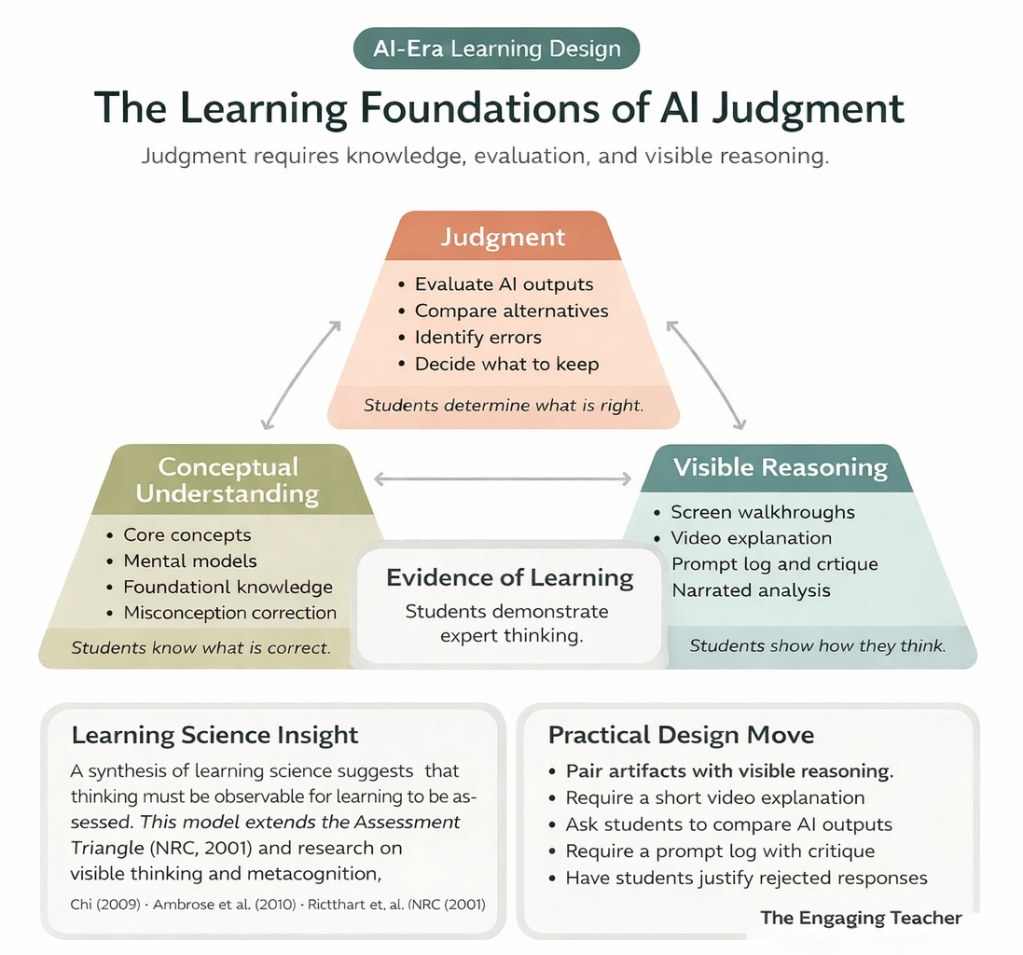

The model presented here proposes that evidence of learning becomes visible when three elements intersect:

- Conceptual understanding: students know what is accurate

- Judgment: students decide which ideas hold up under scrutiny

- Visible reasoning: students show how they think

Conceptual understanding provides the intellectual landscape. It allows students to recognize accurate information, identify misconceptions, and situate ideas within the disciplinary logic of a field.

Judgment operates within that landscape. It is the capacity to compare alternatives, weigh competing claims, and decide which ideas hold under scrutiny.

Visible reasoning, finally, makes that decision-making process observable. Through explanation, reflection, comparison, or critique, students reveal how they move from knowledge to judgment.

When these elements appear together, instructors gain something far more meaningful than a polished artifact. They gain access to the structure of the student’s thinking. And that structure is what assessment ultimately seeks to understand.

Learning Science Foundations

The model described here does not emerge in isolation; it synthesizes several well-established strands of learning science.

One influential framework is the Assessment Triangle, articulated by the National Research Council (2001), which emphasizes the relationship between cognition, observation, and interpretation in assessment design. Effective assessment requires clarity about what students are meant to know (cognition), tasks that make that knowledge observable (observation), and careful reasoning about what the resulting evidence means (interpretation).

Complementing this perspective is a substantial body of research on visible thinking, explanation, and metacognition. Work on active and constructive learning activities suggests that students deepen understanding when they generate their own explanations and articulate their reasoning (Chi, 2009). Similarly, research-based principles of teaching highlight the role of metacognitive awareness in helping students monitor and refine their thinking (Ambrose et al., 2010). The Visible Thinking framework developed through Project Zero further illustrates how structured routines that surface reasoning can make students’ thought processes more explicit and examinable (Ritchhart et al., 2011).

Across these traditions, a consistent insight emerges:

Learning becomes assessable when thinking becomes observable.

Generative AI has not introduced this principle. But by loosening the once-tight coupling between artifact and reasoning, it has made the visibility of thinking a far more pressing design challenge.

Designing for Visible Judgment

If artifacts alone no longer provide reliable access to student reasoning, then the central question for assessment design becomes straightforward, if not simple: Where does the student’s thinking actually appear?

One promising approach is to pair traditional artifacts with moments of visible reasoning. Students might record brief video explanations of how they approached a problem, narrating the choices and revisions that shaped their work. They might compare multiple AI-generated outputs and justify which one is strongest, thereby revealing the criteria guiding their judgment. They might submit prompt logs alongside written work and critique the responses they received, explaining how those outputs were refined, rejected, or reinterpreted.

In each case, the artifact remains part of the assessment. But it is no longer the sole source of evidence. Instead, the artifact becomes one piece of a larger picture in which the student’s reasoning, once largely hidden, becomes visible.

The goal is not to prevent students from interacting with AI systems. Such tools will almost certainly remain embedded in professional and intellectual life. The goal is to ensure that the learner’s judgment remains visible within that interaction.

The Future of Evidence

In an era when answers can be generated instantly, the most interesting intellectual moments may no longer lie in the answers themselves. They may lie instead in the moments when students hesitate, compare possibilities, revise an assumption, detect an error, or explain why one approach holds while another collapses under scrutiny. Those moments are where judgment lives. And if evidence of learning depends on evidence of thinking, then the future of assessment may depend less on the artifacts students produce and more on the reasoning they reveal along the way.

References

Ambrose, S. A., Bridges, M. W., DiPietro, M., Lovett, M. C., & Norman, M. K. (2010). How Learning Works: Seven Research-Based Principles for Smart Teaching. Jossey-Bass.

Chi, M. T. H. (2009). Active–constructive–interactive: A conceptual framework for differentiating learning activities. Topics in Cognitive Science, 1(1), 73–105.

National Research Council. (2001). Knowing What Students Know: The Science and Design of Educational Assessment. National Academy Press.

Ritchhart, R., Church, M., & Morrison, K. (2011). Making Thinking Visible: How to Promote Engagement, Understanding, and Independence for All Learners. Jossey-Bass.