The AI-Era Assessment Visibility Matrix

Where Is the Student’s Judgment Visible?

In a previous article, I explored the learning foundations that allow judgment to emerge. The model presented here asks a related but different question: where does that judgment actually become visible in assessment?

For a long time, many forms of assessment in higher education relied on a simple but rarely articulated assumption:

If the artifact is strong, the thinking behind it must have been strong as well.

Essays, research papers, discussion posts, and summaries served as visible traces of invisible reasoning. Instructors read the final product and inferred the intellectual work that must have preceded it. If the argument was coherent, the explanation precise, or the analysis sophisticated, we assumed the student had done the thinking required to produce it.

For decades, this assumption functioned well enough that we rarely examined it directly.

Generative AI complicates that assumption.

When a polished artifact can be produced with little visible reasoning from the learner, the artifact tells us far less about how the work was produced. A well-structured essay may reflect careful thinking, or it may reflect effective prompting. The artifact remains, but the cognitive path that led to it becomes harder to see.

This shift does not mean that learning has disappeared. Nor does it mean artifacts themselves are no longer valuable. Writing, analysis, and explanation remain central intellectual practices.

But it does raise a deeper question about assessment design:

What actually counts as evidence of learning?

The Visibility Problem

Assessment has always involved interpretation. Instructors observe student work and draw conclusions about what students know and can do. But those interpretations are only as reliable as the evidence they are based on.

When artifacts reliably reflected student reasoning, this system worked reasonably well. But when artifacts become easier to generate independently of that reasoning, the inference from artifact to thinking becomes weaker.

In other words, the challenge introduced by generative AI is not simply technological.

It is epistemological.

If the artifact no longer reliably reveals the thinking that produced it, then instructors must ask a different question:

Where is the student’s judgment visible?

The AI-Era Assessment Visibility Matrix is one way of thinking about that question.

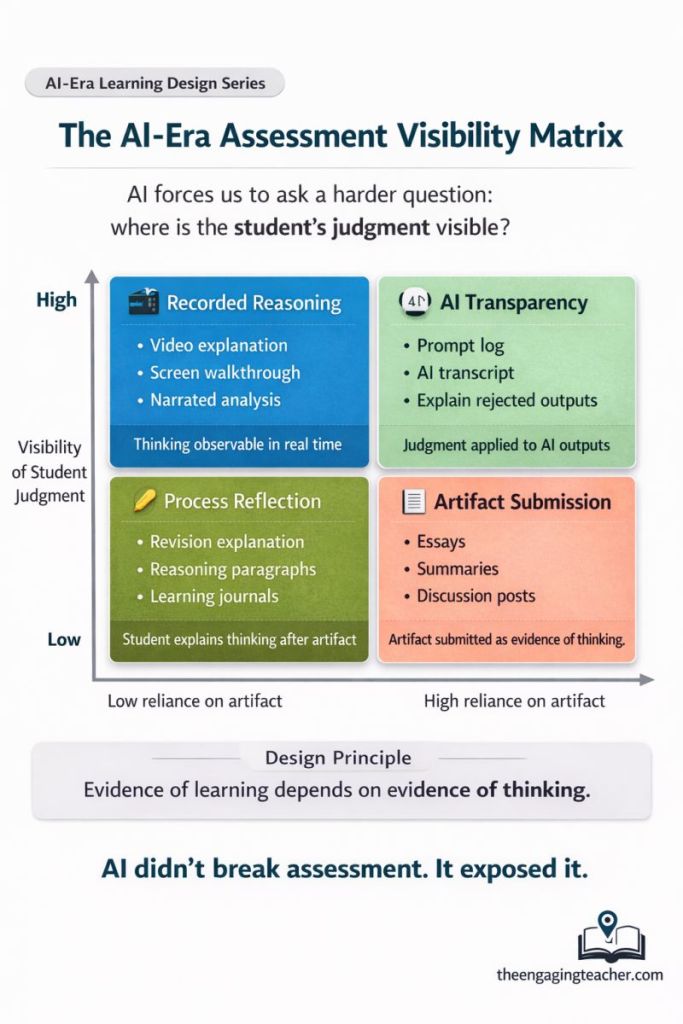

Figure 1. The AI-Era Assessment Visibility Matrix.

The framework maps assessment types along two dimensions: the visibility of student judgment and the degree to which the assignment relies on the artifact itself as evidence of learning.

Two Dimensions of Assessment

The matrix maps assessments along two dimensions.

The first dimension concerns the visibility of student judgment. Some assignments make the student’s reasoning observable in real time, while others leave that reasoning largely hidden.

The second dimension concerns the role of the artifact itself. Some assignments depend heavily on the artifact as proof of learning, while others rely more on the student’s explanation of how that artifact was produced.

Taken together, these dimensions produce four broad categories of assessment design.

Artifact Submission

In the lower-right quadrant are assignments that rely heavily on artifacts while making student reasoning minimally visible.

Examples include essays, summaries, discussion posts, and other forms of written submission where instructors evaluate the final product without seeing the reasoning process that produced it.

These forms of assessment have long been central to education. They remain useful for evaluating communication, synthesis, and argumentation. But when artifacts can be generated quickly by AI systems, their ability to serve as reliable indicators of student thinking becomes more limited.

The artifact alone no longer tells the full story.

Process Reflection

Moving leftward across the matrix into the lower-left quadrant, we encounter assignments that still involve artifacts but add student reflection on the thinking behind them.

Students might write revision explanations, describe their reasoning in accompanying paragraphs, or maintain learning journals that document how their ideas developed over time.

These activities do not eliminate artifacts, but they introduce an additional layer of evidence. The student’s reasoning becomes partially visible through reflection.

Although reflection typically occurs after the artifact is produced, it still gives instructors insight into the cognitive process behind the work.

Recorded Reasoning

In the upper-left quadrant are assessments that make reasoning visible during the thinking process itself.

Examples include video explanations, screen walkthroughs, and narrated analyses in which students explain how they approached a task while completing it.

Here the focus shifts from evaluating the final artifact to observing the reasoning that generates it. Instructors gain access to the student’s decision-making process: how they interpret prompts, weigh alternatives, revise ideas, and connect concepts.

These moments—often fleeting in traditional assessment—become visible evidence of thinking.

AI Transparency

The upper-right quadrant reflects a newer category of assessment emerging in AI-mediated environments.

Here students explicitly document and analyze their interactions with AI systems. They might submit prompt logs, transcripts of AI conversations, or explanations of why certain AI outputs were accepted, revised, or rejected.

Rather than hiding the presence of AI tools, these assignments make the student’s judgment about AI outputs visible.

In this context, the important evidence is not simply the artifact produced but the student’s ability to evaluate, critique, and refine the outputs generated by the system.

Evidence of Thinking

Across these quadrants, one design principle emerges clearly:

Evidence of learning depends on evidence of thinking.

Artifacts remain valuable expressions of learning. But in environments where artifacts can be generated with increasing ease, the artifact alone provides less insight into the student’s reasoning.

Assessment design must therefore evolve to surface the moments where students evaluate ideas, compare alternatives, revise assumptions, and explain their choices.

Those moments are where judgment lives.

Learning Science Foundations

The visibility problem highlighted by generative AI echoes insights from decades of learning science.

The Assessment Triangle proposed by the National Research Council (2001) emphasizes the relationship among cognition, observation, and interpretation in assessment design. Effective assessment requires clarity about what students should know, tasks that reveal that knowledge, and careful interpretation of the resulting evidence.

Research on visible thinking and generative learning similarly demonstrates that students deepen understanding when they articulate their reasoning and reflect on their thought processes (Chi, 2009; Ambrose et al., 2010; Ritchhart et al., 2011).

Taken together, these strands of research suggest a consistent principle:

Learning becomes assessable when thinking becomes observable.

Generative AI has not introduced this principle, but it has made it far more urgent.

The Design Question

In an era when answers can be generated instantly, the most important moments in learning may no longer be those in which answers are produced.

They are the moments when students compare possibilities, detect errors, revise ideas, and explain why one approach is stronger than another.

Those moments reveal judgment.

And if evidence of learning depends on evidence of thinking, then the future of assessment may depend less on the artifacts students produce and more on the reasoning they make visible along the way.

References

Ambrose, S. A., Bridges, M. W., DiPietro, M., Lovett, M. C., & Norman, M. K. (2010). How Learning Works: Seven Research-Based Principles for Smart Teaching. Jossey-Bass.

Chi, M. T. H. (2009). Active–constructive–interactive: A conceptual framework for differentiating learning activities. Topics in Cognitive Science, 1(1), 73–105.

National Research Council. (2001). Knowing What Students Know: The Science and Design of Educational Assessment. National Academy Press.

Ritchhart, R., Church, M., & Morrison, K. (2011). Making Thinking Visible: How to Promote Engagement, Understanding, and Independence for All Learners. Jossey-Bass.