The SIGNAL Lens for Trustworthy Judgment in an AI-Mediated World

We have a trust problem.

For a long time, our systems worked because we believed the work people produced reflected their thinking.

That assumption just broke.

We are now trying to evaluate thinking using evidence that no longer reliably represents it.

And that changes everything.

Introduction: Logic and the Hidden Ethos

There’s a moment I keep coming back to.

Somewhere along the way, we learned to trust language as evidence of thinking. Not just in school, but everywhere—papers, presentations, reports, conversations. If someone could explain something clearly, structure an argument, use the right words in the right order, we took that as a signal of understanding.

It made sense. Language has always functioned as a kind of logic. A well-formed explanation suggested a well-formed thought. Ethos, pathos, logos—they weren’t just rhetorical tools; they were ways of revealing how someone was thinking.

But that connection was never guaranteed. It was inferred.

And now, for the first time at scale, that inference is breaking.

We can generate language that sounds thoughtful without engaging in the same level of thinking that language once represented. We can produce structure without fully constructing understanding. We can assemble arguments that hold together on the surface without having worked through them in any meaningful way.

The issue isn’t that logic has disappeared.

In many ways, we have more access to it than ever.

The issue is that language—our primary proxy for logic—is no longer a reliable signal of how that logic was formed.

And when the signal weakens, something deeper starts to surface.

Not a problem of intelligence.

Not a problem of capability.

A problem of ethos.

A problem of trust.

I. The Break: When Artifacts Stop Carrying Signal

For a long time, we’ve relied on a simple, mostly unspoken assumption: if the work is good, the thinking behind it is probably good too. A well-structured essay, a correct solution, a polished report—these have served as reasonable stand-ins for understanding. Not perfect representations, but close enough that we could make meaningful judgments without constantly second-guessing the connection.

That assumption didn’t come from nowhere. It’s rooted in how assessment has always worked. We can’t observe thinking directly, so we observe what people produce and infer what must have happened cognitively. The National Research Council (2001) formalized this as the assessment triangle: a relationship between cognition, observation, and interpretation. The artifact (the essay, the answer, the deliverable) has been the observable bridge between what we want to know and what we can actually see.

At the same time, fields like education have spent years trying to strengthen that bridge. Work in visible thinking, associated with Ron Ritchhart and others, pushed us to make reasoning more explicit so we wouldn’t have to rely so heavily on inference. The goal wasn’t to eliminate the artifact, but to make it more transparent—to ensure that what we were seeing more accurately reflected how someone was thinking.

All of this worked reasonably well under one condition: that the artifact still carried a meaningful signal of the thinking behind it.

That condition is changing.

With generative AI, it’s now possible to produce work that looks thoughtful, structured, and even insightful without engaging in the same level of reasoning that work was once assumed to represent. This doesn’t mean people aren’t thinking. It means the relationship between what is produced and what has been thought through is no longer reliable in the same way.

It’s tempting to interpret this as a breakdown in integrity, but that framing misses what’s actually shifting. The issue isn’t primarily that people are trying to get around the system. It’s that the system itself depends on a kind of evidence that has become less stable.

What we’re experiencing is a change in signal.

For years, the artifact functioned as a relatively stable proxy. You could look at a piece of work and, while allowing for some variation, trust that it reflected a corresponding level of understanding. Now, that same level of polish can emerge from very different cognitive processes—or from very little sustained reasoning at all.

We’re trying to evaluate thinking using evidence that no longer reliably represents it.

That doesn’t mean artifacts are useless. It means they are no longer decisive on their own. The same output can carry very different meanings depending on how it came to be, and that “how” is increasingly difficult to see.

This is the break.

It’s not dramatic in the sense that everything has collapsed. Work still gets produced. Learning still happens. But the quiet agreement that used to exist between what we saw and what we inferred is no longer as secure as it once was. And when that agreement starts to loosen, the entire structure built on top of it—how we assess, how we evaluate, how we trust what we’re seeing—becomes harder to hold steady.

The question now isn’t whether the work is good. It’s whether the work tells us anything we can trust about the thinking behind it.

Alongside this shift, a growing concern has started to surface across education: that students are completing work without developing the underlying cognitive skills the work was meant to build. Instructors describe assignments that look strong on the surface but fall apart under questioning. Students can produce explanations, but struggle to adapt, transfer, or defend their reasoning when conditions change. The concern isn’t simply that AI is being used—it’s that something more foundational may be weakening. If the primary evidence we rely on no longer reflects the thinking it was designed to signal, then we lose more than accuracy in grading. We lose visibility into whether learning is actually happening. And without that visibility, it becomes difficult to distinguish between performance and understanding, between completion and capability.

II. This Isn’t Just Education

This shift isn’t confined to classrooms.

In professional settings, the same pattern is already taking shape—just with different language. Reports come together faster. Analyses are drafted, revised, and polished with assistance. Strategic recommendations can be assembled in a fraction of the time they once required. In many cases, the quality of outputs is improving.

But something more subtle is happening alongside that improvement.

The relationship between the output and the reasoning behind it is becoming harder to interpret.

In a workplace context, that ambiguity doesn’t show up as an academic integrity issue. It shows up as uncertainty: Who actually understands this? Who can defend these decisions under pressure? Who can adapt when the situation shifts? A clean deliverable no longer guarantees that the thinking behind it is equally strong—or even present in the way we assume.

At the same time, organizations are actively moving in this direction. Skills-based hiring is gaining ground on credential-based filtering. Learning and development is shifting toward continuous upskilling. AI is being integrated into everyday workflows as a tool for augmentation, efficiency, and scale. In many settings, work gets done faster and high-quality outputs are within reach of more people.

All of this is, in many ways, a success story.

But it also introduces a tension that isn’t being addressed directly.

What evidence do we have of how people are thinking?

That question sits underneath hiring decisions, performance evaluations, leadership development, and training outcomes. It’s not new, but it’s becoming more difficult to answer using the signals we’ve traditionally relied on.

As outputs become easier to produce, judgment becomes harder to see.

What we’re encountering, across both education and the workplace, is not just a shift in tools or workflows. It’s a shift in what counts as evidence. Systems that were built to evaluate performance through observable outputs are now operating in an environment where those outputs are less tightly coupled to the cognitive processes we care about.

This is why the problem doesn’t stay contained within education.

It reflects a broader change in how human capability is expressed, supported, and evaluated under conditions of automation.

And if the relationship between output and thinking is no longer stable, then any system that depends on that relationship—classrooms, hiring processes, professional learning, leadership pipelines—has to reconsider how it makes judgments in the first place.

III. From Artifacts to Signals: A Shift in Evidence

We Don’t Need Better Products. We Need Better Signals.

If artifacts are no longer stable, improving the artifact won’t solve the problem.

It’s a natural response to try. We refine assignments. We clarify expectations. We tighten rubrics or restrict tools. Each of these moves is an attempt to restore confidence in what we’re seeing—to make the product, once again, feel like reliable evidence of thinking.

But underneath those efforts is an assumption that no longer holds: that if we design the product well enough, it will faithfully reflect the reasoning behind it.

That assumption depends on a stable relationship between performance and cognition. When that relationship weakens, no amount of refinement to the artifact can fully restore it.

So the question has to shift.

Not: Is the work good?

But: What evidence do we have of the thinking behind it?

Assessment has always been inferential. We observe what someone does and interpret what it suggests about how they’re thinking. In the National Research Council’s terms, the strength of that inference depends on how well observation connects to cognition. When the observable evidence maps cleanly onto the underlying thinking, the inference is relatively strong. When that mapping becomes uncertain, the inference weakens, even if the performance itself appears strong.

This is where the artifact begins to lose its role as a primary anchor.

Instead of interpreting thinking from a single product, we must begin interpreting signals: patterns of reasoning that emerge across time, context, and constraint. Thinking is no longer inferred from what is produced once, but from how judgment holds across:

- decisions made under constraint

- revisions across time

- explanations of tradeoffs

- performance under changing conditions

- the ability to critique, adapt, and transfer

In this model, no single artifact is sufficient. Taken together, though, these signals can form something stronger: a pattern of judgment that is observable, interpretable, and increasingly trustworthy. If a single product can no longer carry enough signal on its own, we need to look elsewhere, not for a replacement but for a broader base of evidence.

What begins to emerge is a different approach to evaluation, one that relies on converging signals rather than a single output. Instead of asking whether a finished product proves understanding, we start to pay attention to the patterns that surround it: the decisions someone makes and how they defend them, the alternatives they consider and set aside, the way their work changes over time, and whether their reasoning holds up when the context shifts.

None of these signals is complete on its own. A reflection can be rehearsed. A revision history can be superficial. A strong explanation in one moment doesn’t guarantee consistency in another. But when these signals begin to align—when the choices, changes, and justifications tell a coherent story—they create something more stable than any single artifact can provide.

In professional education and workplace learning, this kind of triangulation is already taking shape. Evaluation models increasingly look across person, process, and product rather than relying on output alone. The same logic applies here, but with greater urgency.

Because the issue is no longer just incomplete evidence.

It is unstable evidence.

And in that context, trust doesn’t come from the product itself.

It comes from the pattern of evidence surrounding it.

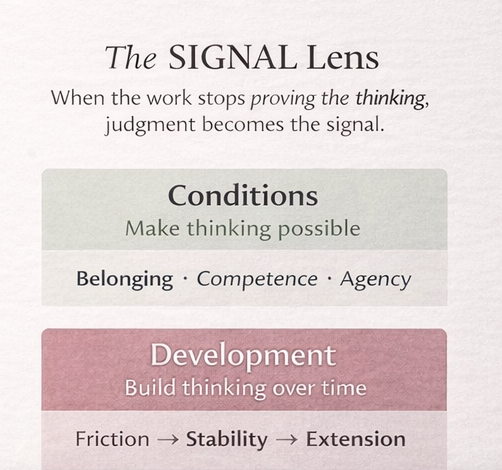

IV. The SIGNAL Lens: Designing for Trustworthy Judgment

The SIGNAL Lens is a framework for designing environments where human judgment remains visible and trustworthy—even in contexts where outputs can be generated with increasing ease.

It doesn’t begin from the assumption that AI is the problem to be eliminated, nor does it treat artifacts as inherently meaningless. Both remain part of the landscape. What changes is how we interpret them, and what we’re willing to treat as sufficient evidence.

At its core, the SIGNAL Lens is a shift in attention.

Instead of asking whether the final product reflects understanding, it asks what kinds of evidence allow us to make a credible judgment about the thinking that produced it. That distinction matters, because once the artifact loses its stability as a proxy, we need a broader and more deliberate way of seeing.

The framework organizes that work across three interdependent layers.

The first is conditions—the environment in which thinking takes place, including whether people feel able to test ideas, revise their thinking, and take intellectual risks. The second is development—how judgment forms over time, including the kinds of support that build or bypass it. The third is signals—the observable traces of thinking that allow us to interpret what someone understands, how they reason, and how their thinking evolves.

Each of these layers can be examined on its own, but they are not independent in practice.

The conditions shape whether thinking is expressed honestly. Development shapes whether that thinking is actually formed. Signals shape whether we can see it at all. When any one of these breaks down, the others become harder to interpret.

Each layer matters—but more importantly, each layer determines whether the others can be trusted.

The SIGNAL Lens doesn’t offer a single method or tool. It offers a way of designing and interpreting evidence under conditions where the old assumptions no longer hold.

Earlier frameworks helped us make thinking visible. The SIGNAL Lens responds to a new condition—where visible products no longer reliably represent thinking—and focuses on designing for trustworthy signals of judgment across time and context.

V. Layer 1 — Conditions: Signal Integrity

Thinking requires risk.

Not just the cognitive effort of working through a problem, but the interpersonal risk of doing so visibly—admitting uncertainty, testing incomplete ideas, changing direction, and, at times, being wrong in front of others. These are not edge cases. They are the ordinary conditions under which real understanding develops.

Whether those behaviors show up depends less on the task itself and more on the environment surrounding it.

Research on psychological safety and motivation, particularly the work of Amy Edmondson on team learning and of Edward Deci and Richard Ryan on self-determination, has consistently shown that people engage more deeply when they experience a sense of safety, competence, and agency. When individuals believe they can contribute without being penalized for uncertainty, when they see themselves as capable of improving, and when their decisions carry weight, they are more likely to participate in the kind of thinking that leads to growth.

Three conditions are foundational:

- Belonging → “I am safe to take intellectual risks.”

- Competence → “I can improve through effort and feedback.”

- Agency → “My decisions matter, and I am responsible for them.”

People need to feel that they can think out loud without immediate judgment. They need to believe that their effort can lead to improvement. And they need to experience their choices as meaningful rather than procedural. These aren’t abstract ideals; they are the conditions that determine whether someone will actually engage with a problem or simply move around it.

I refer to this as thinking safety: the condition in which individuals can express, revise, and test their thinking without penalty, distortion, or premature evaluation. Without thinking safety, the signals we observe are not just incomplete—they are unreliable. We cannot make thinking visible if it is not first safe to reveal.

These conditions are often mischaracterized as soft, as if they stand in opposition to rigor. In reality, they make rigor possible. Productive struggle depends on a willingness to stay with uncertainty long enough to work through it. Without that willingness, difficulty doesn’t produce learning—it produces avoidance.

When the conditions are weak, a predictable pattern emerges. People begin to perform certainty rather than explore uncertainty. They defer to authority—or increasingly, to AI—rather than exercising their own judgment. They focus on completing the task in a way that meets expectations, rather than understanding what the task is asking of them.

When these conditions are absent, what appears as “thinking” may instead be:

- compliance

- mimicry

- or delegation to external systems

In AI-mediated environments, this distinction becomes critical. AI can generate outputs, but it can’t generate ownership of judgment.

If the conditions for ownership are not present, the signals we observe cannot be trusted.

Conditions don’t just shape whether thinking happens. They shape what we are able to see of that thinking. If the environment discourages revision, penalizes visible uncertainty, or rewards only polished outcomes, the signals we observe will reflect those pressures. They may look coherent, even impressive, but they will be filtered through a set of constraints that distort what is actually happening cognitively.

Put another way, conditions determine whether the signals of thinking are honest, strong, and interpretable.

You can design a task that appears to invite deep reasoning. But if the surrounding environment makes it risky to show that reasoning in progress—to change one’s mind, to question an approach, to surface confusion—then what you observe will be a performance shaped by those constraints.

Visible thinking, under those conditions, is not necessarily trustworthy thinking.

And if the signals themselves are distorted, no amount of refinement in the task will fully resolve the problem.

VI. Layer 2 — Development: Structural Stability

Not all support builds thinking.

In the early stages of learning, structure matters. Effort matters. Guided struggle matters. Decades of work on cognitive load and expertise development, particularly by John Sweller, alongside research on desirable difficulties from Robert Bjork, point to the same underlying dynamic: internal models don’t form through ease. They form through effortful processing—through the act of working something out, not simply arriving at an answer.

This is the stage where learners begin to develop a sense of what counts as quality, what alternatives are available, and how different choices shape outcomes. It’s slow, sometimes inefficient, and often uncomfortable. But it’s also where judgment begins to take shape.

Generative AI introduces a complication at precisely this point.

It allows learners to move quickly from prompt to product, often skipping over the intermediate decisions that would normally require them to evaluate, compare, and revise. The output may look complete, even sophisticated, but the underlying structure—the internal sense of why something works or doesn’t—may not have been built in the same way.

The result is becoming increasingly familiar across contexts:

strong performance, weak understanding.

To describe this more precisely, it helps to introduce the idea of a Structural Stability Point (SSP).

A learner is approaching this point when they can, with some independence, make and evaluate decisions about their own work. They can recognize when something is strong or weak, consider alternatives rather than defaulting to the first solution, and explain why they’ve changed direction when they revise. These are not surface-level skills. They reflect an emerging internal structure that allows someone to think with the material, not just produce it.

Before this point, support plays a very specific role. It should be doing the work of building that structure. That means introducing constraints, prompting comparison, and requiring justification. It often means preserving a certain amount of friction—not as a barrier, but as part of formation. When learners have to decide, not just execute, they begin to internalize the criteria they will later use independently.

After this point, the role of support can change. Once a learner has a stable sense of how to evaluate and adjust their own thinking, tools that reduce effort can become productive. Automation can free up attention, allowing them to focus on more complex problems, broader applications, or higher-order judgment.

This is where the timing of AI becomes critical.

Early automation can bypass structure formation.

Later automation can extend judgment.

The distinction isn’t about whether AI is present. It’s about what kind of thinking the environment is requiring at a given stage. When automation replaces the very decisions that build internal models, it weakens development. When it supports decisions that are already grounded in understanding, it can amplify capability.

So the question is not whether AI should be used.

It’s how it is sequenced within the process of learning.

Because if judgment hasn’t been formed, there is very little for AI to extend.

VII. Layer 3 — Signals: What We Can Trust

Even when thinking is possible and actively developing, a central challenge remains:

How do we see it?

Not in a single submission, and not in a finished product that appears complete on its own. What we’re looking for isn’t contained in one moment or one artifact. It emerges across patterns.

Trustworthy inference comes from converging signals of judgment—evidence that accumulates across different kinds of engagement with a problem. Some of these signals are explicit: what learners say about their thinking, how they explain their decisions, how they describe what changed and why. Others appear in real time, in the ability to respond to questions, defend a choice, or adjust an approach when prompted. Still others are embedded in the work itself, not as a final product, but as a record of change—drafts, revisions, annotations that show how thinking has evolved rather than simply where it landed.

There are also signals that emerge when conditions shift. When a task is moved into a different context, when support is reduced, or when time is constrained, what holds—and what doesn’t—reveals something about the underlying structure of understanding. The ability to compare alternatives, to distinguish between stronger and weaker approaches, and to recognize errors as meaningful rather than incidental adds another layer. So does calibration: how well someone can assess their own thinking, align confidence with performance, and articulate what they would do differently next time.

None of these signals is sufficient on its own. A reflection can be rehearsed. A strong explanation in one moment can mask gaps in another. A series of revisions can reflect surface changes rather than conceptual shifts. But when these signals begin to align—when what someone says about their thinking matches what they can do, how their work changes, and how they respond under variation—they form a pattern that is far more stable than any single artifact.

Trustworthy judgment doesn’t reveal itself in one product. It becomes visible in the coherence of signals across time.

This is the shift.

We are no longer asking an artifact to carry the full weight of inference. We are interpreting a body of evidence—distributed, varied, and increasingly necessary if we want to understand how someone is actually thinking.

IX. Implications: This Is a Design Problem

A common concern is that this kind of assessment requires too much time.

It doesn’t have to.

What it requires is a different structure.

When we rely on a single submission to carry the full weight of evaluation, we create pressure for that moment to do too much. The artifact becomes a high-stakes proxy, expected to represent not just performance, but understanding, reasoning, and capability all at once. When that proxy becomes unstable, the instinct is to double down—more detailed rubrics, more constraints, more scrutiny of the final product.

But the issue isn’t that we need more time to inspect the artifact more closely.

It’s that we need more opportunities to see thinking in motion.

Trustworthy judgment doesn’t reveal itself in a single instance. It emerges across moments: in early drafts that show initial assumptions, in revisions that reflect shifts in thinking, in discussions where ideas are tested and clarified, in short defenses where reasoning has to hold up in real time, and in applied tasks where understanding is carried into a new context.

Each of these moments offers a different angle. None is complete on its own, but together they begin to form a pattern. What matters is not that every moment is captured, but that enough variation exists to see how thinking behaves under different conditions.

This is not surveillance.

It is distributed evidence.

The goal is not constant observation, nor is it to document every step a learner takes. It is to design environments where thinking appears in more than one place, in more than one form, so that no single artifact has to carry the full burden of interpretation.

We don’t need constant observation.

We need multiple opportunities to see thinking under different conditions.

When those opportunities are built into the structure of learning, assessment becomes less about extracting proof from a final product and more about recognizing patterns that develop over time.

If the problem is inference, the solution is design.

What’s shifting here isn’t just a toolset or a policy question—it’s the underlying logic of how we evaluate thinking. If the evidence we’ve relied on becomes unstable, then improving enforcement or refining outputs won’t resolve the issue. We have to change how evidence is generated in the first place.

That begins with design.

For educators and learning designers, this means moving beyond models that rely primarily on finished products and toward structures that make thinking visible across multiple moments. Tasks need to do more than prompt completion; they need to require comparison, justification, and revision in ways that can’t be reduced to surface-level performance. This also means being more intentional about how AI is introduced. If tools are positioned as replacements for early decision-making, they can bypass development. If they are integrated after learners have begun to form judgment, they can extend it. The difference is not in the tool itself, but in how it is sequenced within the learning process.

For tools and platforms, the implication is just as significant. Much of the current response to AI has focused on detection—trying to determine whether a tool was used, or how much of a product was generated. But detection doesn’t address the core issue. It doesn’t tell us how someone is thinking, only whether a particular pathway was taken. A more productive direction is to support forms of process visibility that help make thinking interpretable without turning that visibility into surveillance. This includes privileging trace evidence—how work changes over time—over static outputs, and designing interfaces that allow learners to annotate, explain, and make sense of their own process rather than simply being monitored.

For leaders and organizations, the shift is broader. Many systems—hiring processes, performance evaluations, professional development—are built on the assumption that outputs can stand in for capability. As that assumption weakens, the question becomes how to design evaluation systems that can still support sound judgment. This requires moving away from a detection mindset and toward a design mindset: not asking how to catch misuse, but how to create conditions where meaningful evidence of thinking is more likely to appear. It also requires recognizing that trust in learning and performance has always been an inference problem. Technology can change the conditions under which that inference happens, but it doesn’t eliminate the need for it.

Across all of these contexts, the implication is the same.

We are not simply adjusting to new tools.

We are redesigning how we know what someone understands.

X. From Visible Thinking to Trustworthy Judgment

For a long time, we’ve trusted language as evidence of thinking.

If someone could explain something clearly, structure an argument, use the right terms in the right way, we took that as a signal that the thinking behind it was sound. Logos carried weight. It gave us something to point to, something to evaluate, something to trust.

That trust was never perfect. But it was workable.

What’s changed is not our access to logic. In many ways, we now have more of it than ever. Explanations are easier to generate. Arguments can be assembled quickly. Language can be shaped into coherence with very little friction.

What’s changed is our ability to trust what that language represents.

The same explanation can now emerge from very different levels of understanding. The same argument can be produced with or without the underlying reasoning that once gave it meaning. The proxy has loosened.

And when that happens, the problem is no longer just epistemic.

It becomes relational.

Across classrooms, workplaces, and organizations, the question underneath everything else is starting to surface more clearly:

What can we trust about how people are thinking?

Not just what they produce, but how they arrive there. Not just whether something sounds right, but whether it reflects judgment we can rely on.

This is where ethos returns—not as a rhetorical device, but as a condition.

Because when logos can be generated, and pathos can be performed, what remains is the need for trust in the thinker.

That kind of trust doesn’t come from a single artifact. It doesn’t come from a polished explanation or a well-constructed response. It emerges over time, through patterns that hold together across moments, contexts, and conditions.

It is built when people can:

- show their thinking without penalty

- revise without being reduced by it

- make decisions and explain them

- be wrong, and then be better

In other words, it is built relationally. Thinking is not just cognitive but also situational, and what we can trust depends on the conditions under which it is revealed.

The SIGNAL Lens is one way of designing for that.

Not by replacing artifacts, but by repositioning them within a broader set of signals. Not by removing AI, but by clarifying what kind of thinking must still be human. Not by tightening control, but by strengthening the conditions under which trust can form.

Because the goal was never the work.

It was never just the explanation, the answer, or the output.

It was the thinking—and our ability to recognize it, respond to it, and rely on it.

And if that ability is no longer guaranteed by the artifacts we see, then it has to be rebuilt somewhere else.

Not just in our tools.

Not just in our assessments.

But in the relationships that allow thinking to be seen, taken seriously, and trusted.

That is the shift.

Not just from visible thinking to trustworthy judgment.

But from evaluating outputs

to designing for trust.

References

Core Learning & Assessment Theory

National Research Council. (2001). Knowing what students know: The science and design of educational assessment. National Academies Press.

Ritchhart, R., Church, M., & Morrison, K. (2011). Making thinking visible: How to promote engagement, understanding, and independence for all learners. Jossey-Bass.

Motivation, Agency, and Conditions (Layer 1)

Edmondson, A. C. (1999). Psychological safety and learning behavior in work teams. Administrative Science Quarterly, 44(2), 350–383.

Deci, E. L., & Ryan, R. M. (2000). The “what” and “why” of goal pursuits: Human needs and the self-determination of behavior. Psychological Inquiry, 11(4), 227–268.

Learning Science & Development (Layer 2)

Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257–285.

Bjork, E. L., & Bjork, R. A. (2011). Making things hard on yourself, but in a good way: Creating desirable difficulties to enhance learning. In Psychology and the real world.

Ericsson, K. A., Krampe, R. T., & Tesch-Römer, C. (1993). The role of deliberate practice in the acquisition of expert performance. Psychological Review, 100(3), 363–406.

Workplace Learning & Reflection

Schön, D. A. (1983). The reflective practitioner: How professionals think in action. Basic Books.

Kolb, D. A. (1984). Experiential learning: Experience as the source of learning and development. Prentice-Hall.

Judgment & Decision-Making

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

AI & Assessment Context

Bowen, J. A., & Watson, C. E. (2024). Teaching with AI: A practical guide to a new era of human learning. Johns Hopkins University Press.

Stanford Institute for Human-Centered AI. (2025). AI Index Report 2025.

Professional / Business Education (Signals + Convergence)

AACSB International. (2020–2023). Guiding principles and standards for business accreditation (person–process–product emphasis).

More to come.
