Why visibility isn’t enough—and what we can actually trust now

For years, we’ve been told to make thinking visible.

It’s good advice. It’s grounded in learning science. It improves instruction.

But in an AI-mediated world, visibility is no longer enough.

Because now, thinking can be simulated.

Students can generate work that looks like reasoning.

They can produce explanations, arguments, and reflections that appear coherent and complete.

And often, we can’t tell—just by looking at the final product—what actually happened.

That creates a new problem.

Not a design problem.

A detection problem.

The Assessment Shift

We’ve traditionally treated finished work as evidence of learning.

An essay, a project, a discussion post—these were assumed to reflect the thinking behind them.

That assumption no longer holds.

The product is no longer reliable evidence.

What we need evidence of is capability.

And capability doesn’t live in the final output.

It shows up in something else.

From Evidence to Signals

In stable conditions, artifacts can function as evidence.

But in environments where outputs can be generated, refined, or optimized with external support, artifacts become unstable indicators of thinking.

What we have instead are signals.

Not everything in student work is equally meaningful.

Some elements reflect real judgment. Others can be produced without it.

So the question shifts from:

What did the student produce?

to:

What signals of capability are present—and which of those can we actually trust?

Not All Signals Are Equal

This is the gap that often gets missed.

We can make thinking visible.

We can ask for explanations, reflections, and process.

But visibility alone does not guarantee credibility.

Some visible thinking is:

- reconstructed after the fact

- prompted into existence

- only loosely connected to actual decisions

In other words, it looks like thinking—but doesn’t reliably signal capability.

If we stop here, we risk replacing one proxy (the product) with another (the explanation).

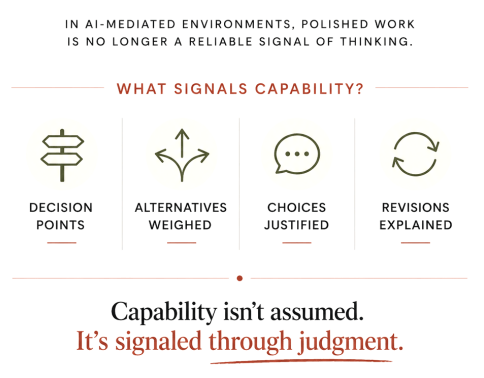

Trustworthy Signals of Capability

To move forward, we need to define which signals actually indicate capability under real conditions.

Across contexts, four patterns consistently emerge as trustworthy signals:

1. Decision Points

Moments where a meaningful choice had to be made

These are points of constraint.

Something could have gone multiple ways—and didn’t.

2. Alternatives Weighed

Evidence that multiple paths were considered

Capability shows up in comparison, not just selection.

The presence of rejected options matters.

3. Choices Justified

Reasoning behind decisions

Not just what was chosen, but why—including tradeoffs, priorities, and constraints.

4. Revisions Explained

Changes connected to purpose, feedback, or new understanding

Revision is not just editing.

It is visible adaptation of thinking.

These signals share a common trait:

They are difficult to produce convincingly without engaging in actual judgment.

They anchor our inference in moments where thinking had to occur.

The SIGNAL Lens, Extended

This work builds on the SIGNAL Lens, which focuses on making thinking visible through:

- Selection

- Intent

- Generation

- Negotiation

- Analysis

- Learning

But as the landscape shifts, SIGNAL alone is not sufficient.

We now need a second layer:

A way to evaluate the trustworthiness of what becomes visible.

That is where Trustworthy Signals of Capability operates.

- SIGNAL → makes thinking observable

- Trustworthy Signals → filters for what is credible

Together, they move us from visibility to valid inference.

Design, Development, Detection

This also clarifies a broader structure for learning design:

Design for Judgment

Create tasks where students must make meaningful decisions

(DECIDE Framework)

Develop Capability

Support learners until they can make those decisions independently

(Structural Stability Point)

Detect Trustworthy Signals

Identify where capability is actually demonstrated

Without this third layer, we risk designing strong tasks and supporting learning—without being able to confidently interpret the results.

What This Means in Practice

This is not about adding more work.

It is about shifting attention.

Instead of asking:

- Is the product complete?

- Does this look correct?

We begin asking:

- Where are the decision points?

- What alternatives were considered?

- What reasoning shaped this choice?

- What changed, and why?

These questions can show up in:

- assignment design

- feedback conversations

- grading criteria

- student self-reflection

The goal is not to make all thinking visible.

Not all thinking should be visible.

But assessment does require designed access to the thinking behind key decisions.

A New Basis for Trust

In any assessment system, we are making inferences.

We are deciding what counts as credible evidence of capability.

In the past, we relied heavily on the product.

Now, we need to rely on something else.

Capability isn’t assumed.

It’s signaled through judgment.

And judgment becomes visible through specific, interpretable signals.

Where This Goes Next

This is not a finished model.

It is a starting point for a larger shift:

From:

- artifact-based evaluation

To:

- signal-based inference under conditions of uncertainty

That shift matters not just in classrooms, but in:

- hiring

- professional learning

- leadership development

Anywhere outputs can be produced without guaranteed understanding.

A Practical Tool

If you’re looking to apply this immediately, I’ve created a two-page tool that translates these ideas into usable prompts for instruction, feedback, and assessment.

You can download it here:

Final Thought

We don’t just need better assignments.

We need better ways to recognize capability when we see it.

Because in this era, what looks like thinking is no longer enough.

We have to know what to trust.
